This changes everything

Building brands, products and innovation processes in the age of generative AI.

I wanted to give the hype a moment to die down before writing about generative AI. The nascent technology seems to have taken the ‘most written about’ mantle from web3 in 2022, with web3 only grabbing the headlines back at moments like FTX’s insolvency. In this post, I want to explore how generative AI is starting to shape brand building. From brand strategy to conceptual ideation to content production, we’re already seeing it used across a variety of use cases. We’ll also look at what the future may hold. Naturally, I’m writing this post with Lex, an AI writing tool.

Firstly, it’s worth clarifying what makes ‘generative AI’ generative. Let’s start with a broad definition. Generative AI is a subset of machine learning that deals with the generation of new data that is similar to, but not exactly the same as, the training data. So, if you were to train a generative model on a bunch of images of cats, the model would learn the general features of a cat (e.g. four legs, fur, whiskers, etc.) and would be able to generate new images of cats that look like cats.
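To make that definition concrete, here is a deliberately tiny sketch in Python. It is not how image models like Stable Diffusion work (they learn far richer distributions using neural networks); it only illustrates the core idea of learning the overall shape of some training data and then sampling new data points that resemble it without copying it. The feature values and function names are made up for illustration.

```python
import random
import statistics

# Hypothetical toy "training data": each row is a made-up feature vector for a cat
# (body length in cm, whisker count). Values are illustrative only.
TRAINING_CATS = [
    (46.0, 24), (50.5, 23), (44.2, 25), (48.8, 24), (52.1, 22), (47.3, 24),
]

def fit(data):
    """Learn a per-feature mean and standard deviation: a very simple 'generative model'."""
    columns = list(zip(*data))
    return [(statistics.mean(c), statistics.stdev(c)) for c in columns]

def generate(model, n, rng):
    """Sample n new feature vectors from the learned (independent) Gaussian per feature."""
    return [tuple(rng.gauss(mu, sigma) for mu, sigma in model) for _ in range(n)]

model = fit(TRAINING_CATS)
for cat in generate(model, 5, random.Random(42)):
    # New "cats": similar to the training data, but not identical to any example in it.
    print(f"length={cat[0]:.1f} cm, whiskers={round(cat[1])}")
```

Real generative models replace these two numbers per feature with millions or billions of learned parameters, but the principle of fitting a distribution and sampling from it is the same.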

Although only recently in the spotlight, the field has been around for a couple of decades. Progress accelerated from 2015, and AI systems now match or exceed human baselines on benchmarks covering reading comprehension, language understanding, and speech and image recognition. The Cambrian explosion we’re seeing today owes much to large language models such as OpenAI’s GPT-3.

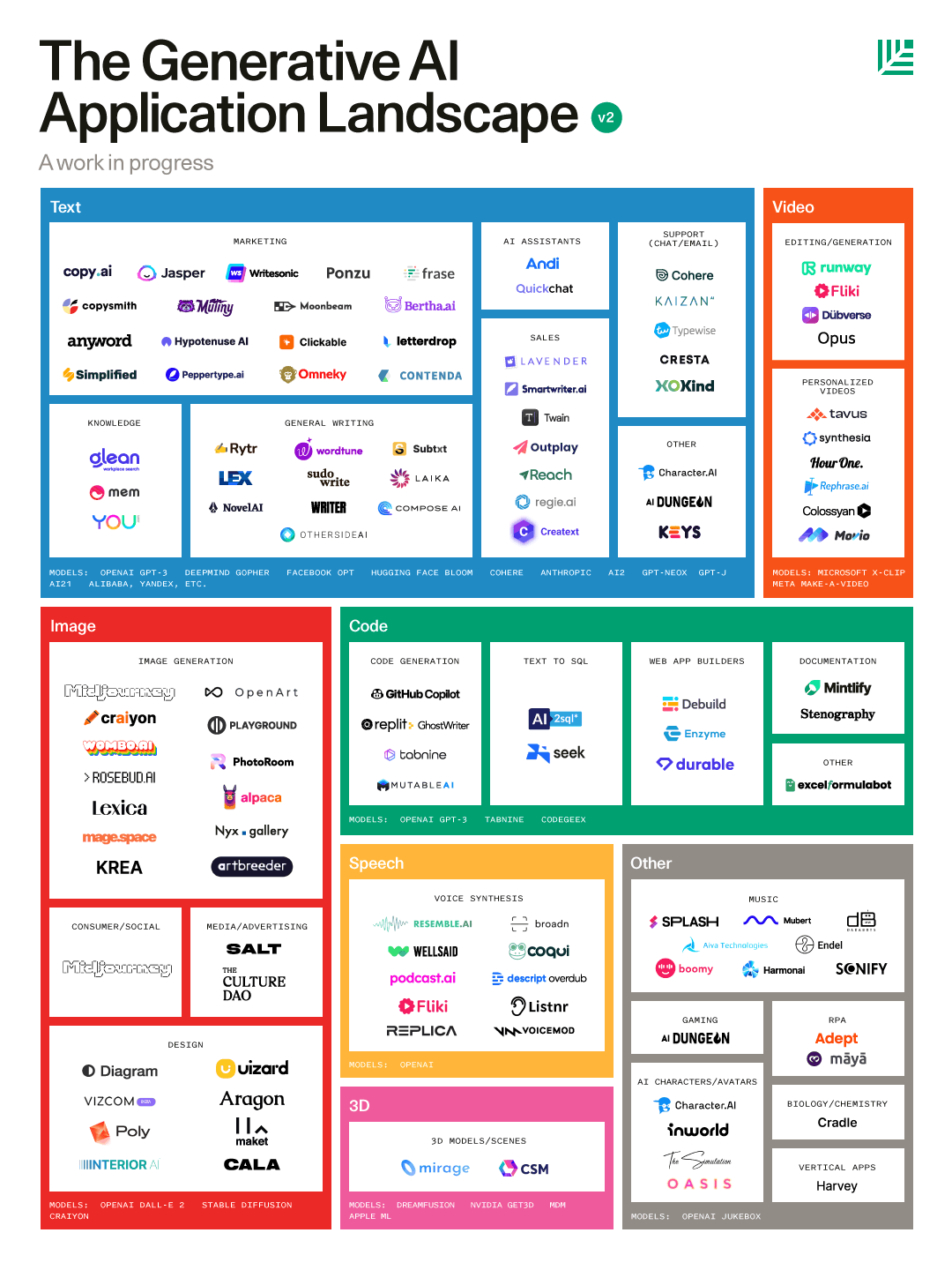

GPT-3 is, in essence, infrastructure on top of which generative AI applications are built. This model layer, as it’s known, spans verticals such as text, code and images. As Bessemer puts it:

Language models are progressively becoming the cognitive structure of real-world AI. And we’re witnessing a promising network effect—improvements in large language models tend to flow into downstream tasks and multi-modal models that span text, video, audio, image, code, and beyond. The advances are snowballing and we are approaching an inflection point.

This proliferation across verticals has driven a venture hype cycle, not dissimilar to what we saw with web3. We’re seeing eyebrow-raising rounds: Stability AI, the company behind Stable Diffusion, raised a $101 million seed round led by Lightspeed at a reported $1 billion valuation. Jasper announced a $125 million Series A led by Insight Partners at a $1.5 billion valuation. Sam Altman’s AI powerhouse OpenAI led an investment in Descript, the San Francisco-based AI video and audio editing tool, at a $500 million-plus valuation. All this while valuations for early-stage companies fell in 2022. Overall funding in the space is down too, which is unsurprising given market conditions.

Despite this decrease, VC firms such as Bessemer Venture Partners remain bullish on the category’s growth:

Today, less than 1% of online content is generated using AI. Within the next ten years, we predict that at least 50% of online content will be generated by or augmented by AI.

Today, we’re already seeing a highly competitive landscape. This will have a meaningful and lasting impact on how we conceptualise, how we approach problem solving, our creative process flows and how we create content. This will irrevocably change how we shape brands — from their strategic direction to the design of their tech to their communications. Let’s take a look at some examples. There’s so much to cover that each section could be its own post. We’ll keep things purposefully high level so we can cover a lot more ground.

Below, I’ll cover some of the use cases that we’re seeing across the generative AI landscape that can be applied across the following areas of brand building:

Strategic development

Conceptual ideation

Digital product creation

Content production

1. Strategic Development

Strategic activities can vary drastically, covering ground such as: brand strategy, experience strategy, product strategy, business / commercial strategy, innovation design or creative strategy.

These activities require research, analysis and synthesis of various datasets, both internal and external. Some quantitative, others qualitative. These activities are exercises in collecting data points, connecting them in ways that haven’t been done before in order to decide on the most appropriate way forward.

AI aiding research

Glean is “intelligent enterprise search software” that connects a company’s various data sources (e.g. Google Drive, Figma, Dropbox) and lets users search across them for relevant information. Results are personalised to each user, based on their role, who they work with and their search history.

Aylien is a news aggregator that uses natural language processing to curate articles from over 2 million news articles a day, tailored to specific companies, categories or keyword searches.

Both of these tools can be used as a way to target and extract highly relevant data, internally and externally, for a researcher’s needs.

AI aiding strategic exploration

AI writing tools such as Lex, Jasper or CopyAI are being used by writers to overcome writer’s block, providing suggestions about possible next sentences or paragraphs based on what has already been written (and in the same style, no less). It’s like autocomplete on steroids. This presents an opportunity for strategists, should they need to explore different positioning territories, brand strategies, research themes, etc.
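‘Autocomplete on steroids’ is a fair description: at their core, language models predict likely continuations of what has already been written. The toy bigram model below, in Python, shows that basic mechanic by counting which word tends to follow which. It is only an illustration under simplifying assumptions; the function names and sample text are hypothetical, and real writing tools rely on large neural language models rather than simple word counts.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def suggest_next(model, prompt, k=3):
    """Return up to k most likely next words after the last word of the prompt."""
    words = prompt.lower().split()
    if not words or words[-1] not in model:
        return []  # nothing learned about this word, so no suggestion
    return [word for word, _ in model[words[-1]].most_common(k)]

corpus = (
    "our brand helps people create. our brand helps teams ship faster. "
    "our purpose helps people feel seen."
)
model = train_bigrams(corpus)
print(suggest_next(model, "Our brand"))  # prints ['helps']
```

A strategist’s prompt works the same way in spirit: the more (and better) context you give the model, the more relevant its continuations become.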

I ran some experiments with real-world brand strategies I’ve worked on, using Lex to gauge how effective this sort of approach could be. These are under NDA, so I can’t share details. The experiments involved plugging in a brand’s vision, mission and purpose statements, then prompting the tool until it produced territories useful enough to keep exploring.

What became clear is that the program really struggled to output anything meaningful if it didn’t have a good example territory to work off. But, if you provide a model for it to reference (for example, a territory heading + paragraph write up), it does a better job. I didn’t find the output groundbreaking and often it was a little repetitive. That said, it could be a great way to surface strategic options quickly and perhaps provide some surprising alternatives.

I hold great hopes for the future of AI-powered text generation in strategic development. It’s not there today, but as the technology develops, there’s great promise for it to become more conceptual and less derivative. As Rob Toews argues:

AI-powered text generation will create many orders of magnitude more value than will AI-powered image generation in the years ahead. Machines’ ability to generate language—to write and speak—will prove to be far more transformative than their ability to generate visual content… Language is humanity’s single most important invention. More than anything else, it is what sets us apart from every other species on the planet. Language enables us to reason abstractly, to develop complex ideas about what the world is and could be, to communicate these ideas to one another, and to build on them.

AI aiding analysis & synthesis

Momentive uses an AI engine fuelled by 55 billion questions answered on its platform. By combining a brand’s bespoke research with this wider database, it surfaces insights, trends and sentiment tailored to specific industries, companies or roles.

2. Conceptual Ideation

AI aiding innovation design

The Design Thinking process (or any innovation design equivalent) starts with articulating a problem to solve from the customer’s perspective. It then requires ideation around possible solutions, prototyping and iteration. Solutions and their prototypes could be anything: a paper model, a video, an app design. Generative AI opens up possibilities throughout this process, from low to high fidelity. AI writing tools can help generate initial ideas. Text-to-image tools such as DALL-E, Midjourney or Stable Diffusion can bring concepts to life visually, as with DALL-E’s well-known ‘avocado armchair’ concept. This could be helpful for providing inspiration for industrial design, for example.

AI aiding creative concepting

We now have more tools and more shortcuts to bring people’s imaginations to life accurately. Obviously, AI writing tools will give copywriters increased efficiency, possibly new language or narrative threads, and help them overcome writer’s block.

Text-to-image applications will be increasingly used to help with concepting in games, films, the metaverse, on-stage set design, interior design and even architecture. Simple user prompts will surface multiple variations of an environment, landscape or building. As Parametric Architecture notes:

AI will become crucial for architects in creating more innovative designs in the physical world. It will allow architects greater efficiency, expand creativity and imagination in the design process, and aid in advancing our traditional construction technology and methodology.

Storyboarding will become far more efficient, and iterations on environments, characters and objects will become more targeted.

These tools will also have an impact on casting and wardrobe, by providing accurate representations of required talent and their outfits. This will also help animators speed up their exploratory character work.

Beyond the writing tools and text-to-image applications mentioned already, creatives can leverage text-to-video programs like Meta’s Make-A-Video or Google’s Imagen Video to create short clips. These are more rudimentary for now, but expect to see a lot of progress in this vertical.

3. Digital Product Creation

AI aiding UI design

Still nascent, this branch of generative AI can help streamline the design process, from Components’ colour explorer, which helps define an overall colour palette, to Diagram’s Figma task automator, which rapidly executes tedious design tasks.

AI aiding development

GitHub Copilot is a text-to-code AI tool that uses OpenAI’s Codex model to suggest code in real time. It’s currently in hot water, facing a class-action lawsuit over alleged copyright and licence violations. We’ll discuss IP rights further when we touch on the ethical implications of generative AI.

Lizzy Lawrence’s article on Protocol provided a succinct encapsulation of Copilot:

The goal is to replace the boring part of coding. Instead of going to Stack Overflow or Quora or Google to find a basic coding solution, Copilot grabs it for you. You press tab to accept the suggestion, or keep typing to ignore. “I can delegate some tasks that I just don’t want to do,” Galiatin said. “It saves a lot of mental energy and a lot of time.”

GitHub conducted a study of over 2000 programmers using Copilot. It found that “60–75% of developers feel more fulfilled with their job, feel less frustrated when coding, and can focus on more satisfying work” and that “More than 90% of respondents reported that Copilot helps them complete tasks faster”.

This sort of tech will not only drastically reduce prototyping time but also shorten timelines (and therefore reduce costs) for full builds, especially those that rely heavily on standard code.

4. Content Production

Image production

The opportunity here is clear. Reduced time and production costs will be a massive driver of a shift towards relying on generative AI across illustration, 3D artwork, patterns and photography. The transition likely won’t happen immediately, as legacy processes hold on and prompt craft slowly improves.

Creative Optimisation

This will be particularly relevant for creative assets that require variants (e.g. for audience targeting, media placement optionality or A/B testing). Generative AI will allow headline and copy options to be created en masse, and static or video imagery variations to be explored.

Voiceovers

Voiceovers are a staple of content production, but producing them can be a lengthy, cumbersome and potentially expensive process. Enter companies like play.ht that generate synthetic voices. These voices are uncannily realistic, and they’ll soon be widespread in advertising, internal videos and educational content. These companies can also clone the voices of real people, which you may have heard about when play.ht created the fictional Steve Jobs x Joe Rogan podcast.

Video Production & Editing

We’ve already covered Google and Meta’s text-to-video applications. As these progress, I’m sure we’ll see them being utilised at scale on their advertising platforms (and elsewhere).

Companies like Movio are geared up for businesses to build videos in a pick-n-mix kind of way, with deepfake-style talking heads, backgrounds and more. Runway leans more towards the craft end of the spectrum, helping editors refine content with the assistance of AI. This could mean replacing textures, colour grading from text prompts, replacing backgrounds and much more.

I expect these applications will gain traction in larger productions as the technology improves and we become more proficient with the tools.

Ethical Implications

It would be remiss not to talk about the ethical implications around generative AI. I don’t plan on deep diving into this topic, as it’s fraught with complexity.

In brand and product building, one of the highest-order concerns is copyright. Large language models (like GPT-3 and its equivalents), image models and the applications built on them rely on content (such as images, text or code) scraped from public repositories to train their systems.

Patrick Kulp from Adweek has a thorough write up about what considerations brands and agencies should be thinking about. I encourage anyone stepping into the practical implementation of these tools to give that a read.

Connected, but somewhat different, is the matter of attribution. AI is indiscriminate in what it mashes together to create something new, whether imagery, video or code. Because its sources are so fragmented, generative AI companies are, for now, operating largely outside existing law. Packy had a particularly insightful take on how this could be overcome in due course:

I also have a thesis that AI will be web3’s ultimate best use case. People will need a way to own, permission, and benefit from their data as it feeds increasingly powerful models, and if open AI wins, decentralized ownership and governance of those models will be critically important. Looking back in a decade or so, I wouldn’t be surprised if we viewed all of web3’s early stumbles and mistakes as necessary experiments for the main event: governing AI in such a way that it doesn’t turn us all into paperclips, owning enough of it that we benefit from its use of our data, and rewarding open source contributors for their work.

Authenticity is another key consideration. Deepfakes can be almost indistinguishable from reality. We recently saw the news that Bruce Willis, to his surprise, appeared in a Russian ad made by Deepcake, which showed him tied to a bomb on the back of a yacht. The company claimed Willis sold his performance rights, which the actor’s representatives deny. Legal proceedings continue.

What impact generative AI will have on the workforce depends on who you ask. Carson Grubaugh, an artist, paints a bleak picture in this Forbes article:

“Concept artists, character designers, backgrounds, all that stuff is gone. As soon as the creative director realizes they don’t need to pay people to produce that kind of work, it will be like what happened to darkroom techs when Photoshop landed, but on a much larger scale.”

Regulatory measures or legal constraints due to IP rights may impede the erosion (or at least the pace of it) to some extent, but it’s becoming increasingly clear that these tools will have a significant impact.

And tools they are. Adobe sees generative AI as a creative assistant and is already building these tools into its platform, with IP considerations front and centre.

We may also see the rise of new design or creative disciplines such as “prompt engineering”. Domain experts in a new field like this will be in short supply, so I expect they will be in high demand.

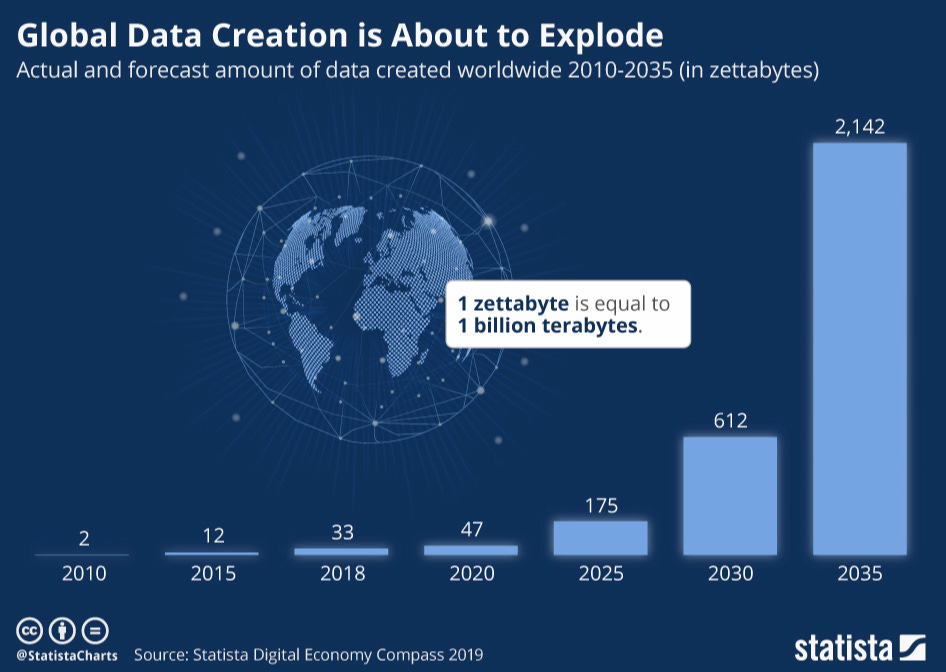

Finally, a word on data, which isn’t something we consider much. The processing power and data storage that generative AI requires are a real cause for concern, and may even accelerate the trend in the chart above. Today, the data infrastructure industry accounts for roughly 4% of the world’s emissions (about the same as the entire aviation industry). Ten years from now, that could look very different.

Adoption

The allure of Generative AI is that it is so flexible in how it’s used. It can act as a strategic thought partner, an imagination machine or an efficiency hack. It’ll have an impact on the content of our work as well as how that work gets done.

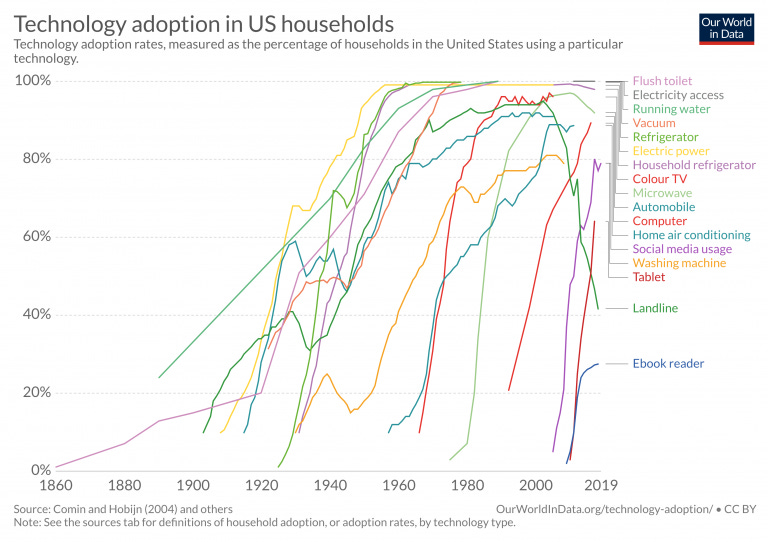

The pace of adoption for technologies continues to accelerate over time, and Generative AI will be no different. Brands, consultancies, agencies and production houses ought to keep pace with developments in this space, or they could find themselves struggling to match their competitors on speed and cost.

Is this a good thing for the creative industries? I really don’t think that’s the right question to ask. The more relevant question is “how can we ensure we design this sector to improve the creative industries?”. We’ve seen ‘big tech’ take default ownership of issues in the past, with unintended consequences. As we see some of the potential implications of Generative AI, the onus is on businesses today to understand the technology and get heavily involved in using, building and regulating it for the betterment of the industries it’ll impact.
